There’s a lot of noise on the internet. Between Reddit, Hacker News, and tech blogs, keeping up with what actually matters in enterprise software is a full-time job. So I built a fully automated system that does it for me: it runs in the cloud, is powered by AI, and was deployed end-to-end in less than two hours using Claude Code.

Here’s how.

What We Built (Mostly What Claude Built)

A C# Azure Function that runs every hour and:

- Fetches posts from configurable Reddit subreddits and Hacker News

- Filters for recency: only posts from the last 7 days

- Deduplicates across runs: never evaluates the same URL twice

- Applies an AI editorial filter: Claude decides what’s genuinely newsworthy

- Writes curated results to Azure Blob Storage as timestamped JSON

The output is clean, structured JSON ready to feed into a newsletter, dashboard, or notification system.
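
To make the recency step concrete, here is a minimal sketch of parsing one Hacker News item and applying a 7-day window. The field names `title`, `url`, and `time` (Unix seconds) come from the public HN API; the `HnPost` record and the `Recency` helper are hypothetical names for illustration, not the project's actual types.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;

// Hypothetical post shape; the project's real model is not shown in this article.
public sealed record HnPost(string Title, string Url, DateTimeOffset PublishedUtc);

public static class Recency
{
    // Parses a single HN item payload. Returns null when a required field is
    // missing, for example text-only posts that carry no "url".
    public static HnPost? ParseHnItem(string json)
    {
        using JsonDocument doc = JsonDocument.Parse(json);
        JsonElement root = doc.RootElement;
        if (!root.TryGetProperty("url", out JsonElement url) ||
            !root.TryGetProperty("title", out JsonElement title) ||
            !root.TryGetProperty("time", out JsonElement time))
        {
            return null;
        }

        return new HnPost(
            title.GetString() ?? "",
            url.GetString() ?? "",
            DateTimeOffset.FromUnixTimeSeconds(time.GetInt64()));
    }

    // Keeps only posts published inside the window that ends at "now".
    public static List<HnPost> WithinWindow(
        IEnumerable<HnPost> posts, DateTimeOffset now, TimeSpan window)
    {
        DateTimeOffset cutoff = now - window;
        return posts.Where(p => p.PublishedUtc >= cutoff).ToList();
    }
}
```

Passing `now` in as a parameter, rather than reading the clock inside the method, keeps the filter easy to test.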

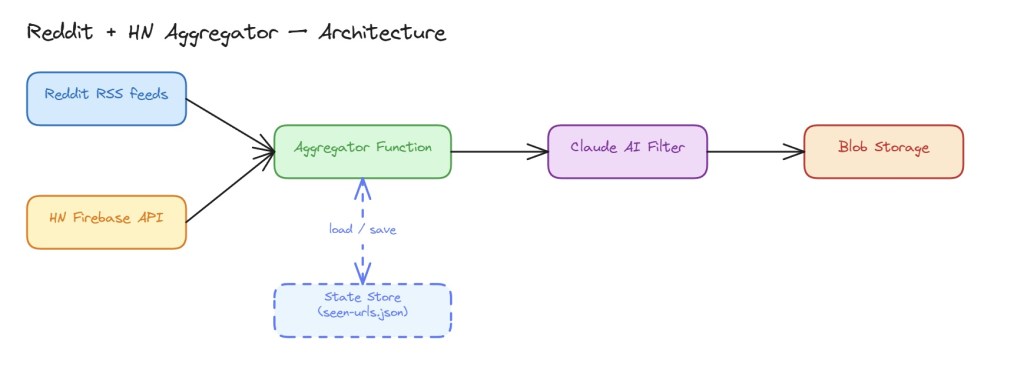

The Architecture

The system has three layers: data collection, AI filtering, and persistence.

    Reddit RSS feeds ──┐
                       ├─► Aggregator Function ─► Claude AI Filter ─► Blob Storage
    HN Firebase API ───┘          │
                                  └─► State Store (seen URLs)

Tech Stack

| Concern | Choice |
| --- | --- |
| Runtime | Azure Functions v4, .NET 8 isolated worker |
| Reddit data | Public Atom/RSS feed (r/{sub}/top.rss) |
| HN data | Firebase REST API |
| AI filtering | Anthropic Claude (claude-opus-4-6) via raw HttpClient |
| Storage | Azure Blob Storage |
| Schedule | NCRONTAB timer trigger |

Interesting Engineering Decisions

Reddit: RSS over JSON API

The Reddit JSON API (/top.json) started returning 403s without authentication. Rather than deal with OAuth, we switched to Reddit’s public Atom/RSS feed (no credentials required) and parsed it with System.Xml.Linq in a handful of lines. Simple wins.
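
As a rough illustration of how little code the feed parsing needs, here is a sketch using System.Xml.Linq. It assumes standard Atom elements (`entry`, `title`, `link` with an `href` attribute, `updated`); the `FeedEntry` record and `AtomFeed` class are hypothetical names, and the exact elements Reddit emits should be checked against a live feed.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

// Hypothetical shape for one parsed feed entry.
public sealed record FeedEntry(string Title, string Url, DateTimeOffset Updated);

public static class AtomFeed
{
    // Atom elements live in this namespace, so every lookup must be qualified.
    private static readonly XNamespace Atom = "http://www.w3.org/2005/Atom";

    public static List<FeedEntry> Parse(string xml)
    {
        XDocument doc = XDocument.Parse(xml);
        return doc.Descendants(Atom + "entry")
            .Select(e => new FeedEntry(
                (string?)e.Element(Atom + "title") ?? "",
                (string?)e.Element(Atom + "link")?.Attribute("href") ?? "",
                // The explicit XElement conversion parses the ISO 8601 timestamp;
                // entries without <updated> fall back to MinValue.
                (DateTimeOffset?)e.Element(Atom + "updated") ?? DateTimeOffset.MinValue))
            .ToList();
    }
}
```

The XML string itself would come from an `HttpClient` GET against the feed URL, which is left out here to keep the sketch offline.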

Claude as an Editorial Filter

Instead of writing brittle keyword heuristics to judge whether a post is “real tech news,” we hand that job to Claude with a carefully crafted system prompt based on Editorial Guidelines:

A post qualifies if it is relevant to enterprise software development AND meets at least one of the following: Change, Innovation, or Emergent Ideas, and is not a minor patch release, pure marketing, or clickbait.

Claude receives posts in batches of 25, returns a JSON array of qualifying indices, and we map those back to posts. If the API is unreachable, the batch passes through unfiltered as a deliberate fail-safe so the pipeline never breaks.
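
The batching and fail-open behavior can be sketched independently of the API call. In the example below, `classify` is a hypothetical stand-in for the request to Claude: it takes one batch and returns the zero-based indices of the posts that qualify. Treating `HttpRequestException` and `TaskCanceledException` as "unreachable" is an assumption about how the failure surfaces.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Net.Http;
using System.Threading.Tasks;

public static class BatchFilter
{
    public static async Task<List<T>> FilterAsync<T>(
        IReadOnlyList<T> posts,
        Func<IReadOnlyList<T>, Task<IReadOnlyList<int>>> classify,
        int batchSize = 25)
    {
        var kept = new List<T>();

        // Enumerable.Chunk splits the input into arrays of at most batchSize items.
        foreach (T[] batch in posts.Chunk(batchSize))
        {
            try
            {
                IReadOnlyList<int> indices = await classify(batch);

                // Map indices back to posts, skipping duplicates and anything
                // out of range instead of trusting the response blindly.
                kept.AddRange(indices
                    .Distinct()
                    .Where(i => i >= 0 && i < batch.Length)
                    .Select(i => batch[i]));
            }
            catch (Exception ex) when (ex is HttpRequestException or TaskCanceledException)
            {
                // Fail open: if the API cannot be reached, keep the whole batch.
                kept.AddRange(batch);
            }
        }

        return kept;
    }
}
```

With 47 new posts this produces one batch of 25 and one of 22. The trade-off of failing open is that an outage lets unfiltered posts into the output for that run.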

We used structured JSON output (output_config.format.type = "json_schema") to guarantee a parseable response every time; no regex needed.

Deduplication Without a Database

To prevent re-evaluating the same URLs across hourly runs (and paying for unnecessary AI API calls), we persist a rolling state file — state/seen-urls.json — in Blob Storage. On each run:

- Load seen URLs into a HashSet<string> for O(1) lookup

- Filter new posts against it

- After filtering, mark all new posts as seen (not just the ones that passed the AI filter — rejected posts shouldn’t be retried)

- Prune entries older than 7 days to keep the file small

No database, no Redis, no infrastructure overhead. A blob file is enough.
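
The steps above can be sketched as a small in-memory class that round-trips through JSON. Storing a first-seen timestamp per URL is an assumption (something like it is needed for the 7-day pruning), and the dictionary's key lookup plays the role of the HashSet. `SeenUrlState` and its members are hypothetical names; reading and writing the blob itself is omitted.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;

public sealed class SeenUrlState
{
    // URL -> time it was first seen. Key lookups are O(1), like a HashSet.
    private readonly Dictionary<string, DateTimeOffset> _seen = new(StringComparer.Ordinal);

    public int Count => _seen.Count;

    // Rebuilds the state from the JSON text of the state file.
    public static SeenUrlState FromJson(string json)
    {
        var state = new SeenUrlState();
        var loaded = JsonSerializer.Deserialize<Dictionary<string, DateTimeOffset>>(json);
        if (loaded != null)
        {
            foreach (var (url, firstSeen) in loaded) state._seen[url] = firstSeen;
        }
        return state;
    }

    public string ToJson() => JsonSerializer.Serialize(_seen);

    // Returns the URLs that have not been evaluated in an earlier run.
    public List<string> FilterNew(IEnumerable<string> urls) =>
        urls.Where(u => !_seen.ContainsKey(u)).Distinct().ToList();

    // Marks every new URL as seen, whether or not it passed the AI filter.
    public void MarkSeen(IEnumerable<string> urls, DateTimeOffset now)
    {
        foreach (string url in urls) _seen.TryAdd(url, now);
    }

    // Drops entries older than maxAge and reports how many were removed.
    public int Prune(DateTimeOffset now, TimeSpan maxAge)
    {
        DateTimeOffset cutoff = now - maxAge;
        List<string> stale = _seen.Where(kv => kv.Value < cutoff).Select(kv => kv.Key).ToList();
        foreach (string url in stale) _seen.Remove(url);
        return stale.Count;
    }
}
```

Because posts older than 7 days are already dropped by the recency filter, pruning state on the same horizon should not cause a URL to be evaluated twice.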

The AI Filter in Practice

A typical hourly run might look like this:

    Fetched 312 posts from the last 7 days.
    Deduplication: 47 new / 265 already seen (skipped).
    Running news quality filter on 47 new posts…
    News filter: 11/25 posts passed.
    News filter: 9/22 posts passed.
    Filter complete: 20/47 posts kept.
    20 posts saved to 2026/03/24/09-00-01.json

Of the 312 posts fetched, 265 had already been evaluated in earlier runs; of the 47 new ones, 20 make it through. That’s the kind of signal-to-noise ratio that makes a curated feed actually worth reading.
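
The blob name in the last log line follows a date-based path. One way to produce a name of that shape is sketched below; the `BlobNaming` helper is hypothetical, and using UTC for the timestamp is an assumption.

```csharp
using System;
using System.Globalization;

public static class BlobNaming
{
    // Builds a path such as "2026/03/24/09-00-01.json" from the run time.
    // The slashes are quoted so they are emitted literally, regardless of culture.
    public static string ForRun(DateTimeOffset runTime) =>
        runTime.UtcDateTime.ToString(
            "yyyy'/'MM'/'dd'/'HH-mm-ss", CultureInfo.InvariantCulture) + ".json";
}
```

Blob Storage treats the slashes as virtual folders, so runs group naturally by year, month, and day.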

Deployment

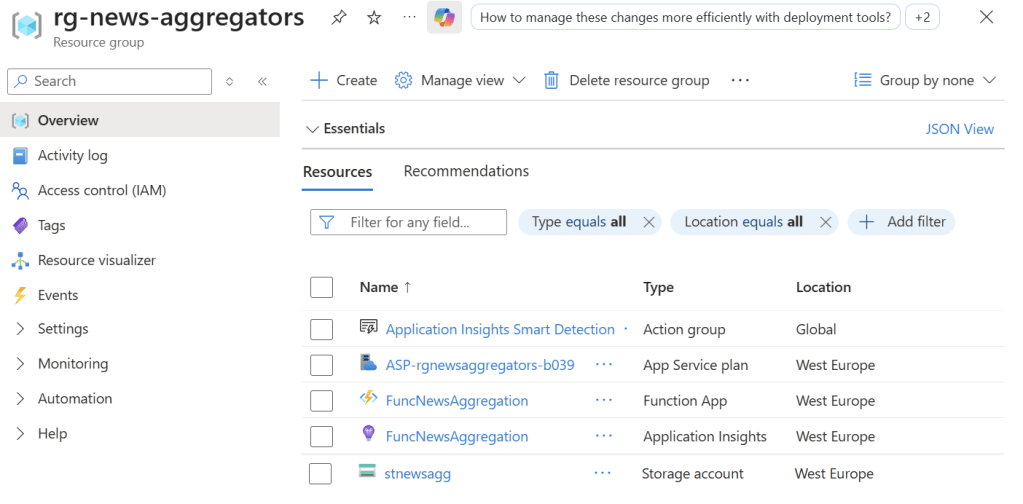

The whole thing deploys with two commands:

    # Push app settings (API keys, schedule, etc.)
    az functionapp config appsettings set \
      --name FuncNewsAggregation \
      --resource-group rg-news-aggregators \
      --settings @appsettings.json

    # Publish the function
    func azure functionapp publish FuncNewsAggregation --dotnet-isolated

Done. The function is live, running on Azure’s infrastructure, costing pennies per day.

What’s Next

A few natural extensions:

- Email or Slack digest — trigger a Logic App when a new blob is written

- Web frontend — serve the JSON blobs as a read-only news feed

- Scoring — weight HN scores more heavily now that RSS drops Reddit scores

- More sources — dev.to, lobste.rs, or custom RSS feeds are easy to add

Takeaways

The most interesting lesson here isn’t the code; it’s the division of labor. Deterministic logic handles the mechanical work: fetching, deduplicating, and scheduling. The judgment call, “Is this actually news?”, goes to the model.

That separation keeps the system simple, cheap to run, and easy to adjust. Change the system prompt, and you change the editorial policy. No retraining, no feature engineering.

Two hours from idea to deployed function. That’s the pace at which you can build now.

All source code is C# targeting .NET 8. The function runs on an Azure Consumption plan, and its hourly runs incur roughly $0 in costs, well within the free tier.